Download the Excel file here: Data Cleaning with SQL

Download the SQL file here: Data Cleaning with SQL

**Zip folder contains the SQL file as both an SQL file and a TXT file, for easy viewing by people who don't have SQL Server installed

Data preparation and cleaning is not a lot of fun. Data preparation is especially irritating when you have to repeat the task over and over again. While no one has come up with a data cleaning magic wand that I am aware of, there are some ways to automate the dreaded process.

While there are many software offerings out there that can help you with data cleaning, some are very expensive and have a steep learning curve. So in this article I am going to focus on SQL Server. I chose SQL Server for the following reasons:

- SQL programming is a vital skill to have if you want to work in data

- SQL Server is readily available. Many companies have it. If you don’t have access to a current SQL Server, you can download the Express version for free.

The Problem

You work for a university and the scheduling software is sorely out of date. The reporting capabilities are limited to 4 pre-canned reports. There is no money for an upgrade, but your boss is asking you for some reports that are not available from the system.

So you go to extract some data from the outdated software, but the program only lets you extract the following 4 columns (Start Date, Class, Professor, Classroom).

Let’s look at the data

Here is the data sample you pulled.

To effectively create the reports your boss is asking for, you need to add a few more data points: the day of the week, the department, and the building for each class.

Now, while these columns can be added manually, keep in mind that a class schedule at even a small university has at least 100 classes. And when you consider that you will have to repeat this process for every new semester, finding a way to automate it will save you a lot of time in the end.

Step 1: Get the data into SQL Server

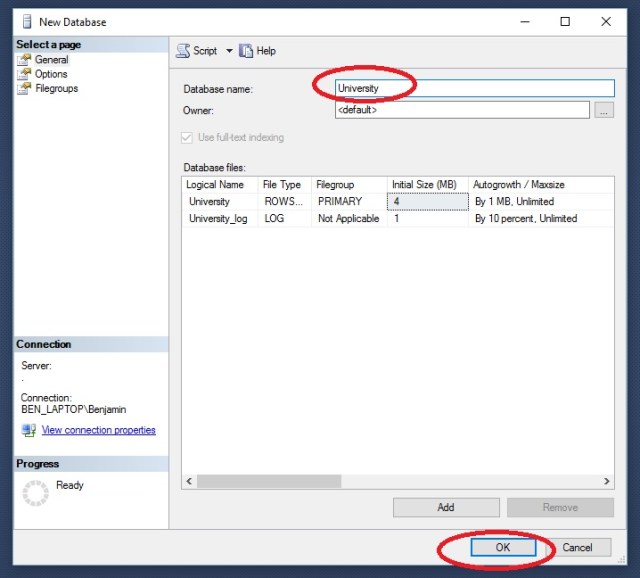

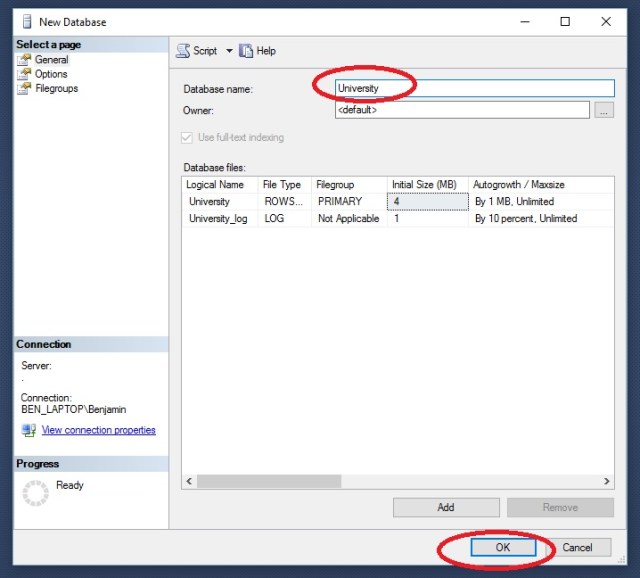

First, let’s create a new database to work with.

In SQL Server Management Studio, go to Object Explorer, right click Databases, and choose New Database.

In the New Database window, give your new database the name University and click OK.
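If you prefer a query window to the dialogs, the same database can probably be created with a single statement. This is a minimal sketch, assuming the default file locations and sizes are acceptable and that your login is allowed to create databases:

```sql
-- Create the database used throughout this article.
-- Assumes default file settings are fine for a small practice database.
CREATE DATABASE University;
GO
```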

Right click on your new database and choose Tasks > Import Data.

On the Welcome to SQL Server Import and Export Wizard screen, click Next.

On Choose a Data Source, select Microsoft Excel from the drop down. Then browse to your downloaded Excel file: dataCleanWSQL.xlsx

On Choose a Destination, select SQL Server Native Client from the drop down. If you are working on the same machine as your SQL Server instance, you can just put a period “.” in Server Name. Otherwise, enter the server name. Database should be pre-filled with University.

Select Copy data from one or more tables or views and click Next

Take note of Destination name ([dbo].[Sheet1$]) and click Next

Click Next, then Finish, and watch the process run. Note how many rows were transferred. This should match your source data.

Back in Object Explorer, go to University > Tables > dbo.Sheet1$. Right click the table and choose Select Top 1000 Rows.

Here is the output, showing the contents of your imported data
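The same check can also be run from a query window. The sketch below assumes the wizard kept the default destination name [dbo].[Sheet1$]; the ImportedRows alias is just an illustrative name:

```sql
USE University;
GO

-- Same preview that "Select Top 1000 Rows" produces.
-- The brackets match the destination name shown by the wizard.
SELECT TOP (1000) *
FROM dbo.[Sheet1$];

-- Row count to compare against the number of rows the wizard reported.
SELECT COUNT(*) AS ImportedRows
FROM dbo.[Sheet1$];
GO
```

If the count does not match the number of rows in the Excel sheet, it is worth re-running the import before going any further.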

Step 2: Write the SQL Script

Load the SQL script by going to File>Open>File and choose DataCleanwSQL.SQL

While this is not an SQL tutorial, I will give a brief overview of the script file.

**Note: GO marks the end of a complete block (batch) of code. It is a batch separator recognized by SQL Server tools such as Management Studio rather than an SQL statement itself. Each batch is completed before the script continues on to the next one. While it is not always needed, it is good coding practice.

The first block of code, use University, simply tells SQL Server which database we are working with.

The next block, alter table, lets you make changes to an existing table. In this case, we are adding 3 columns for our new data fields to go in (day, department, building).
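For readers following along without the download, these first two blocks might look roughly like the sketch below. It is not the downloadable script itself: the data types and lengths are assumptions, and [day] is bracketed only because DAY is also the name of a built-in function.

```sql
-- Point the session at the database created in Step 1.
USE University;
GO

-- Add the three new columns. Data types and lengths are assumptions.
ALTER TABLE dbo.[Sheet1$]
ADD [day]      nvarchar(20) NULL,
    department nvarchar(50) NULL,
    building   nvarchar(10) NULL;
GO
```

The GO after the alter table block matters here. If a later statement in the same batch referred to the new columns, SQL Server would reject the batch with an invalid column name error, because the columns do not exist yet when the batch is compiled.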

The next block is an update block. In this block we set the value of our new column day to datename(dw, [start date]). This converts the datetime stamp from [start date] to a weekday name.
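A sketch of what that update could look like, assuming the day column has already been added and that the wizard imported [Start Date] as a date/time column:

```sql
-- Fill the new [day] column with the weekday name of each class's start date.
-- The text returned (for example 'Monday') depends on the session language.
UPDATE dbo.[Sheet1$]
SET [day] = DATENAME(dw, [Start Date]);
GO

-- Quick sanity check on a known date: 1 January 2024 was a Monday.
SELECT DATENAME(dw, CAST('20240101' AS date)) AS WeekdayName;
GO
```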

The next block is a Case statement. This works like a nested If-Then-Else statement in many languages. In this block we are stating: When the value of the Class column is like ‘Calc%’ (% is a wildcard in SQL that matches any string of characters), Then set the value of the department column to ‘Math’.
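As a rough illustration, the department update might be written as follows. Only the ‘Calc%’ rule comes from the description above; the ‘Bio%’ rule and the ‘Unassigned’ fallback are hypothetical placeholders to show how further rules would be stacked.

```sql
-- Derive the department from the start of the class name.
UPDATE dbo.[Sheet1$]
SET department =
    CASE
        WHEN [Class] LIKE 'Calc%' THEN 'Math'     -- rule described in the article
        WHEN [Class] LIKE 'Bio%'  THEN 'Science'  -- hypothetical extra rule
        ELSE 'Unassigned'                         -- hypothetical fallback
    END;
GO
```

The rules are evaluated from top to bottom and the first match wins, so more specific patterns should be listed before more general ones.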

The next code block is another Case statement. This one uses a between condition, stating that When the Classroom column value is between 100 and 199, Then set the value of the building column to ‘A’.
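A sketch of the building update, assuming Classroom was imported as a numeric column. Only the 100 to 199 rule is taken from the description above; the second range is a hypothetical example of an additional building.

```sql
-- Derive the building from the classroom number.
-- BETWEEN is inclusive, so both 100 and 199 map to building A.
UPDATE dbo.[Sheet1$]
SET building =
    CASE
        WHEN Classroom BETWEEN 100 AND 199 THEN 'A'  -- rule described in the article
        WHEN Classroom BETWEEN 200 AND 299 THEN 'B'  -- hypothetical extra rule
        ELSE NULL                                    -- leave unmatched rooms empty
    END;
GO
```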

Finally, Select * from dbo.sheet1$ displays the contents of the table.

Now we have our data the way we want it. You can connect your BI tool to SQL Server to work with the data, you can export it as a CSV file, or you can just copy and paste it into Excel for further analysis.


Step 3: Repeat as needed

The great advantage of the method above is that now we have a script we can run over and over again. So the next semester, when you download the new class listing, you don’t have to spend a lot of time on data prep. You simply have to import it into SQL Server and run your script.
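One thing to watch when repeating the process: if last semester's table is still in the database, the alter table block will fail because the three columns already exist. One possible approach, sketched here under the assumption that the old data has been exported or is no longer needed, is to drop the old table before running the import again:

```sql
USE University;
GO

-- Remove last semester's import, if it exists, so the wizard can recreate the table.
-- Assumes the old data has already been exported or is no longer needed.
IF OBJECT_ID(N'dbo.[Sheet1$]', N'U') IS NOT NULL
    DROP TABLE dbo.[Sheet1$];
GO
```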
