Having a solid understanding of current public sentiment can be a great tool. When a new marketing campaign launches, or a news release about the CEO starts making customers angry, the people in charge of a company’s public image need to know how it is being received, and they need to know fast. In the world of social media, we can get those answers fast. One simple yet effective tool for testing the public waters is a sentiment analysis.

A sentiment analysis works like this. We take a bunch of tweets about whatever we are looking for (in this example we will be looking at President Obama). We then split those tweets into individual words, count the number of positive words, and compare it to the number of negative words.

Now the simplicity of this model misses out on some things. Sarcasm can easily be missed. For example: “Oh GREAT job Obama. Thanks for tanking the country once again”. Our model will count 2 positive words (great and thanks) and 1 negative word (tanking), giving us an overall score of positive 1.
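Here is a minimal sketch of that count in base R. The two tiny word lists are made up for this illustration (they are not the real lexicon), and the variable names are hypothetical.

```r
# Toy word lists, assumed for this example only
toy.pos <- c("great", "thanks")
toy.neg <- c("tanking")

tweet <- "Oh GREAT job Obama. Thanks for tanking the country once again"

# Strip punctuation, lowercase, and split on whitespace
words <- strsplit(tolower(gsub("[[:punct:]]", "", tweet)), "\\s+")[[1]]

n.pos <- sum(words %in% toy.pos)  # number of positive words found
n.neg <- sum(words %in% toy.neg)  # number of negative words found
toy.score <- n.pos - n.neg

print(c(positive = n.pos, negative = n.neg, score = toy.score))
```

The sarcastic tweet comes out net positive, which is exactly the weakness described above.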

There are more complex methods for dealing with the issue above, but you’ll be surprised at how well the system works all by itself. Yes, we are going to misread a few tweets, but we can read thousands of them, so the sheer volume of data dilutes the effect of the sarcastic ones.

The first thing we need to do is get a list of good and bad words. You could make up your own, but there are plenty of pre-populated lists on the Internet for free. The one I will be using is from the University of Illinois at Chicago. You can find the list here:

http://www.cs.uic.edu/~liub/FBS/sentiment-analysis.html

Once you go to the page, click on Opinion Lexicon and then download the rar file.

You can download from the link below, but I want you to know the source in case this link breaks.

Now open the rar file and move the two text files to a folder you can work from.

Next let’s make sure we have the right packages installed. For this we will need twitteR, plyr, stringr, and xlsx. If you do not have these packages installed, you can install them using the following code (just swap twitteR for whatever package you need to install). Package names are case-sensitive, so it is twitteR, not TwitteR.

install.packages("twitteR")
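If you would rather not install packages one at a time, something like the following sketch checks which of the four are missing. The helper name find.missing.packages is hypothetical, and the install line is left commented out so nothing is downloaded until you choose to run it.

```r
# Hypothetical helper: return the packages from `needed` that are not installed.
# `installed` defaults to the packages R can currently see.
find.missing.packages <- function(needed,
                                  installed = rownames(installed.packages())) {
  setdiff(needed, installed)
}

needed <- c("twitteR", "plyr", "stringr", "xlsx")
to.install <- find.missing.packages(needed)
print(to.install)

# Uncomment to actually install whatever is missing:
# if (length(to.install) > 0) install.packages(to.install)
```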

Now load the libraries

library(stringr)

library(twitteR)

library(xlsx)

library(plyr)

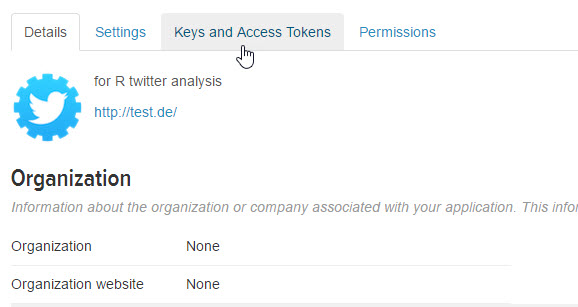

Next, connect to the Twitter API. If you do not already have a connection set up, check out my lesson on connecting to Twitter, “R: Connect to Twitter with R”.

api_key <- "insert consumer key here"

api_secret <- "insert consumer secret here"

access_token <- "insert access token here"

access_token_secret <- "insert access token secret here"

setup_twitter_oauth(api_key,api_secret,access_token,access_token_secret)
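If you plan to share your script, one option is to keep the keys out of the file and read them from environment variables instead. This is only a sketch: the helper get.credential and the variable names are assumptions of mine, not something twitteR requires.

```r
# Hypothetical helper: read one credential from an environment variable
# and stop with a clear message if it is not set.
get.credential <- function(name) {
  value <- Sys.getenv(name, unset = "")
  if (!nzchar(value)) {
    stop("Environment variable ", name, " is not set")
  }
  value
}

# Example usage (variable names are assumptions; set them yourself first):
# api_key             <- get.credential("TWITTER_API_KEY")
# api_secret          <- get.credential("TWITTER_API_SECRET")
# access_token        <- get.credential("TWITTER_ACCESS_TOKEN")
# access_token_secret <- get.credential("TWITTER_ACCESS_TOKEN_SECRET")
```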

Okay, so now remember where you stored the text files we just downloaded and set that location as your working directory (wd). Note that we use forward slashes here, even if you are on a Windows box.

setwd("C:/Users/Benjamin/Documents")

neg = scan("negative-words.txt", what="character", comment.char=";")

pos = scan("positive-words.txt", what="character", comment.char=";")

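scan reads each file as a series of whitespace-separated character strings (that is what what="character" asks for) and ignores everything from a ; to the end of the line, which skips the comment header at the top of each file.

You should now have 2 character vectors, one of positive words and one of negative words.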
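To see what comment.char is doing, here is a small sketch that runs the same scan call on a throwaway file. The file contents are invented for the demonstration.

```r
# Write a tiny stand-in lexicon file with a comment header (made-up contents)
demo.file <- tempfile(fileext = ".txt")
writeLines(c("; this header line is a comment",
             ";",
             "",
             "good",
             "great",
             "happy"),
           demo.file)

# Same arguments as above; quiet = TRUE just hides the "Read N items" message
demo.words <- scan(demo.file, what = "character", comment.char = ";", quiet = TRUE)
print(demo.words)
```

Only the three words survive; the comment lines and the blank line are dropped.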

You can add words to either list by combining vectors with c(). Below I add wtf, a popular Internet abbreviation for What the F@#$@, to the negative words:

neg = c(neg, 'wtf')
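If you add several words at once, it may help to lowercase them and drop duplicates, since the scoring function further down lowercases every tweet before matching. A sketch with made-up words:

```r
# Stand-in for the real negative list (made-up contents)
demo.neg <- c("bad", "awful")

# Words to add; note the uppercase entry and the duplicate
extra.words <- c("WTF", "awful", "smh")

# Lowercase the additions, then remove duplicates
demo.neg <- unique(c(demo.neg, tolower(extra.words)))
print(demo.neg)
```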

Okay, now here is the engine that runs our analysis. I have tried to comment the commands you may not recognize. I have lessons on most of the features used here, and will make more lessons on anything missing. If I tried to explain this step by step, this page would be 10000 lines long and no one would read it.

score.sentiment = function(tweets, pos.words, neg.words)

{

require(plyr)

require(stringr)

scores = laply(tweets, function(tweet, pos.words, neg.words) {

tweet = gsub('https://','',tweet) # removes https://

tweet = gsub('http://','',tweet) # removes http://

tweet = gsub('[^[:graph:]]', ' ', tweet) # replaces anything that is not a visible character (whitespace, control characters and, depending on locale, emoticons) with a space

tweet = gsub('[[:punct:]]', '', tweet) # removes punctuation

tweet = gsub('[[:cntrl:]]', '', tweet) # removes control characters

tweet = gsub('\\d+', '', tweet) # removes numbers

tweet = str_replace_all(tweet, "[^[:graph:]]", " ") # repeats the non-visible character cleanup using stringr

tweet = tolower(tweet) # makes all letters lowercase

word.list = str_split(tweet, '\\s+') # splits the tweets by word in a list

words = unlist(word.list) # turns the list into vector

pos.matches = match(words, pos.words) # position of each word in pos.words, or NA if it is not in the list

neg.matches = match(words, neg.words)

pos.matches = !is.na(pos.matches) # converts positions to TRUE (matched) or FALSE (no match)

neg.matches = !is.na(neg.matches)

score = sum(pos.matches) - sum(neg.matches) # TRUE and FALSE are treated as 1 and 0, so they can be summed

return(score)

}, pos.words, neg.words )

scores.df = data.frame(score=scores, text=tweets)

return(scores.df)

}
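To try the same idea without Twitter, plyr, or stringr, here is a stripped-down base-R sketch of the scoring logic. It is not the function above, just a simplified variant; the function name score.tweet, the sample tweets, and the tiny word lists are all made up for illustration.

```r
# Simplified base-R variant of the per-tweet scoring step (illustrative only)
score.tweet <- function(tweet, pos.words, neg.words) {
  tweet <- gsub("https?://", "", tweet)      # drop the http:// or https:// prefix
  tweet <- gsub("[[:punct:]]", "", tweet)    # drop punctuation
  tweet <- gsub("[[:cntrl:]]", "", tweet)    # drop control characters
  tweet <- gsub("\\d+", "", tweet)           # drop numbers
  words <- strsplit(tolower(tweet), "\\s+")[[1]]
  sum(words %in% pos.words) - sum(words %in% neg.words)
}

# Made-up tweets and word lists
demo.tweets <- c("I love this great plan",
                 "What a terrible, awful idea",
                 "Meeting at 10")
demo.pos <- c("love", "great")
demo.neg <- c("terrible", "awful")

demo.scores <- vapply(demo.tweets, score.tweet, numeric(1),
                      pos.words = demo.pos, neg.words = demo.neg,
                      USE.NAMES = FALSE)
print(data.frame(score = demo.scores, text = demo.tweets))
```

The first tweet scores 2, the second scores -2, and the third scores 0 because none of its words appear in either list.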

Now let’s get some tweets and analyze them. Note: if your computer is slow or old, you can lower the number of tweets to process. Just change n= to a lower number like 100 or 50.

tweets = searchTwitter('Obama',n=2500)

Tweets.text = laply(tweets,function(t)t$getText()) # gets text from Tweets

analysis = score.sentiment(Tweets.text, pos, neg) # calls sentiment function

Now let’s look at the results. The quickest method available to us is to simply plot a histogram:

hist(analysis$score)

My results look like this:

If 0 is completely neutral, most people are generally neutral about the president, and more tweets are positive than negative. This is not uncommon for an outgoing president. They generally seem to get a popularity boost after the election is over.
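If you want numbers to go with the histogram, a sketch like the one below tabulates the scores and works out the share of negative, neutral, and positive tweets. The score vector here is invented for the demonstration; with real data you would use analysis$score in its place.

```r
# Made-up scores standing in for analysis$score
demo.scores <- c(-2, -1, 0, 0, 0, 1, 1, 2)

# How many tweets landed on each score
print(table(demo.scores))

# Share of tweets that are negative, neutral, and positive
share <- c(negative = mean(demo.scores < 0),
           neutral  = mean(demo.scores == 0),
           positive = mean(demo.scores > 0))
print(share)
```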

Finally, if you want to save your results, you can export them to Excel.

write.xlsx(analysis, "myResults.xlsx")

And you will end up with a file like this
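The xlsx package depends on Java, so if it will not load on your machine, a plain CSV file (which Excel can also open) is a possible fallback. A sketch using a made-up data frame and a temporary folder; with real data you would pass analysis and your own file name.

```r
# Made-up results standing in for the analysis data frame
demo.analysis <- data.frame(score = c(1, -2, 0),
                            text = c("great speech",
                                     "awful, terrible plan",
                                     "press conference at noon"),
                            stringsAsFactors = FALSE)

# Write to a temporary location so the example leaves no files behind
csv.path <- file.path(tempdir(), "myResults.csv")
write.csv(demo.analysis, csv.path, row.names = FALSE)

# Read it back to confirm the file round-trips
round.trip <- read.csv(csv.path, stringsAsFactors = FALSE)
print(round.trip)
```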