So I received this message on Twitter early this morning. Always curious about what else is out there, I went to the site and checked it out.

Link to website here: http://www.PHPSimplex.com/en/index.htm

I will say I was pleased with what I found. This is actually a great way to cover the next topic I had in mind.

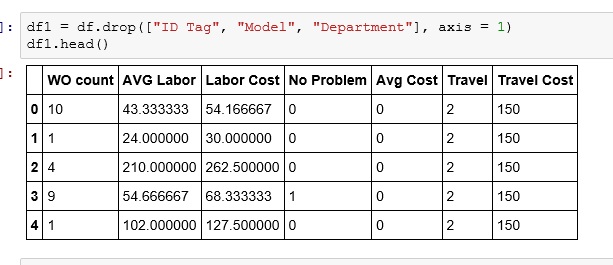

We have already built a few Linear Programming models with Excel Solver, and now I want to show a bit more of what goes on under the hood. More advanced optimization tools don't work from spreadsheets; instead, they require you to model your problem as a series of linear formulas.

The theory and examples section of the PHPSimplex website has some good examples. But to help you make the transition from spreadsheets to linear formulas, I am going to take an Excel Solver solution and show you how I would reproduce it in PHPSimplex.

Below is a nice, simple problem. We are building 2 types of furniture: tables and chairs. We are given the material and labor each item requires and the available amount of each. We also have the profit per unit each item brings in. Our goal is to maximize profit, so we need to find out how many of each item to build.

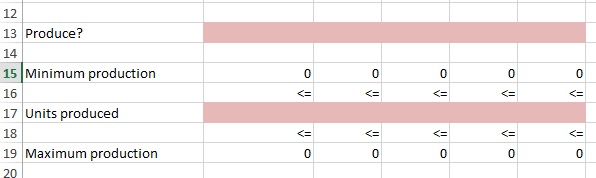

As you can see, this problem has already been solved using Excel Solver. Let’s see how we would approach this using PHPSimplex.

Go to the website and click the icon in the left corner, or click PHPSimplex in the menu bar.

I am going to select the Simplex/Two Phases Method for this example.

Based on our problem, I am going to choose 2 decision variables (my 2 pink changing cells) and 2 constraints (more on these below).

Next, I set the function to Maximize (I want maximum profit) and enter it as 6X1 + 8X2. X1 and X2 represent my 2 changing cells, and the coefficients 6 and 8 come from the profit per unit in my spreadsheet.

So, to put it in plain English: Max Profit = $6 * # of Tables Built + $8 * # of Chairs Built
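If it helps to see the objective as something you can evaluate, here is a minimal Python sketch. The function name `profit` is my own illustration, not anything PHPSimplex uses; `tables` plays the role of X1 and `chairs` the role of X2.

```python
def profit(tables: float, chairs: float) -> float:
    """Objective function: $6 per table (X1) plus $8 per chair (X2)."""
    return 6 * tables + 8 * chairs


# Example: building 1 table and 1 chair earns 6 + 8 = $14.
print(profit(1, 1))  # 14
```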

Now for our constraints:

30X1 + 20X2 <= 300 (Materials: 30 * # Tables + 20 * # Chairs can't exceed 300)

5X1 + 10X2 <= 110 (Labor: 5 * # Tables + 10 * # Chairs can't exceed 110)
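To make the constraints concrete, here is a hypothetical helper (again, my own naming) that checks whether a production plan fits within the available material and labor. It also enforces X1, X2 >= 0, which a linear programming model like this one assumes, since you can't build a negative number of tables.

```python
def is_feasible(tables: float, chairs: float) -> bool:
    """Return True if the plan respects both resource limits and non-negativity."""
    materials_ok = 30 * tables + 20 * chairs <= 300  # Materials constraint
    labor_ok = 5 * tables + 10 * chairs <= 110       # Labor constraint
    nonnegative = tables >= 0 and chairs >= 0
    return materials_ok and labor_ok and nonnegative


print(is_feasible(4, 9))   # True: uses exactly 300 material and 110 labor
print(is_feasible(10, 1))  # False: needs 320 material, more than the 300 available
```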

Now you can hit Continue and PHPSimplex will step you through the process, or you can just hit Direct Solution to get the answer. Let's do that.
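For anyone curious about what happens when you step through with Continue, below is a minimal tableau simplex sketch in Python. It is my own illustration, not PHPSimplex's code. Because both constraints are <= with non-negative right-hand sides, the origin (build nothing) is already a feasible starting point, so this sketch skips the first phase of the Two Phases Method and runs only the ordinary simplex iterations. It picks the most negative objective-row entry to enter, uses the minimum-ratio test to leave, and has no anti-cycling rule, so treat it as a teaching aid for small problems like this one.

```python
from fractions import Fraction


def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0 (assumes every b[i] >= 0).

    Returns (x, objective_value). Uses exact fractions to avoid rounding noise.
    """
    m, n = len(A), len(c)
    # Build the tableau: each constraint row gets one slack variable.
    # Row layout: [decision vars | slack vars | right-hand side]
    tableau = []
    for i in range(m):
        slack = [Fraction(1 if j == i else 0) for j in range(m)]
        tableau.append([Fraction(v) for v in A[i]] + slack + [Fraction(b[i])])
    # Objective row stores -c, so a negative entry means profit can still improve.
    tableau.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]  # slack variables start in the basis

    while True:
        obj = tableau[-1]
        # Entering column: most negative objective-row coefficient.
        col = min(range(n + m), key=lambda j: obj[j])
        if obj[col] >= 0:
            break  # no improving direction left, so the current basis is optimal
        # Leaving row: minimum ratio test over rows with a positive pivot entry.
        candidates = [(tableau[i][-1] / tableau[i][col], i)
                      for i in range(m) if tableau[i][col] > 0]
        if not candidates:
            raise ValueError("problem is unbounded")
        _, row = min(candidates)
        # Pivot: scale the pivot row to 1, then eliminate the column elsewhere.
        pivot = tableau[row][col]
        tableau[row] = [v / pivot for v in tableau[row]]
        for i in range(m + 1):
            if i != row and tableau[i][col] != 0:
                factor = tableau[i][col]
                tableau[i] = [a - factor * p for a, p in zip(tableau[i], tableau[row])]
        basis[row] = col

    # Read the solution: basic decision variables take their row's RHS value.
    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = tableau[i][-1]
    return x, tableau[-1][-1]


x, best = simplex_max(c=[6, 8], A=[[30, 20], [5, 10]], b=[300, 110])
print(f"Tables: {x[0]}, Chairs: {x[1]}, Profit: ${best}")
# Tables: 4, Chairs: 9, Profit: $96
```

On this model the sketch pivots twice: chairs (X2) enter first because $8 is the larger coefficient, then tables (X1) enter, landing on the corner where both the materials and labor constraints are fully used.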

If you compare the results below with the Excel Solver results above, you will see they are the same.
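If you want a third opinion that doesn't rely on either tool, this problem is small enough to brute-force. The sketch below (my own, and not how Solver or PHPSimplex work) tries every whole-number production plan and keeps the most profitable feasible one. It only checks integer plans, so it agrees with the LP answer here because the LP optimum happens to land on whole numbers: 4 tables and 9 chairs for $96.

```python
def best_integer_plan():
    """Try every whole-number (tables, chairs) plan; return the most profitable feasible one."""
    best = None
    # Materials cap tables at 300 / 30 = 10; labor caps chairs at 110 / 10 = 11.
    for tables in range(0, 11):
        for chairs in range(0, 12):
            if 30 * tables + 20 * chairs <= 300 and 5 * tables + 10 * chairs <= 110:
                profit = 6 * tables + 8 * chairs
                if best is None or profit > best[2]:
                    best = (tables, chairs, profit)
    return best


print(best_integer_plan())  # (4, 9, 96)
```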

Bottom Line

This is just a quick glimpse at what PHPSimplex offers. It is a fun site with lots of great information. If you want to dig deep and really learn how the process works mathematically, this is the site for you.

I highly recommend visiting their site.

Here is the link again: http://www.PHPSimplex.com/en/index.htm